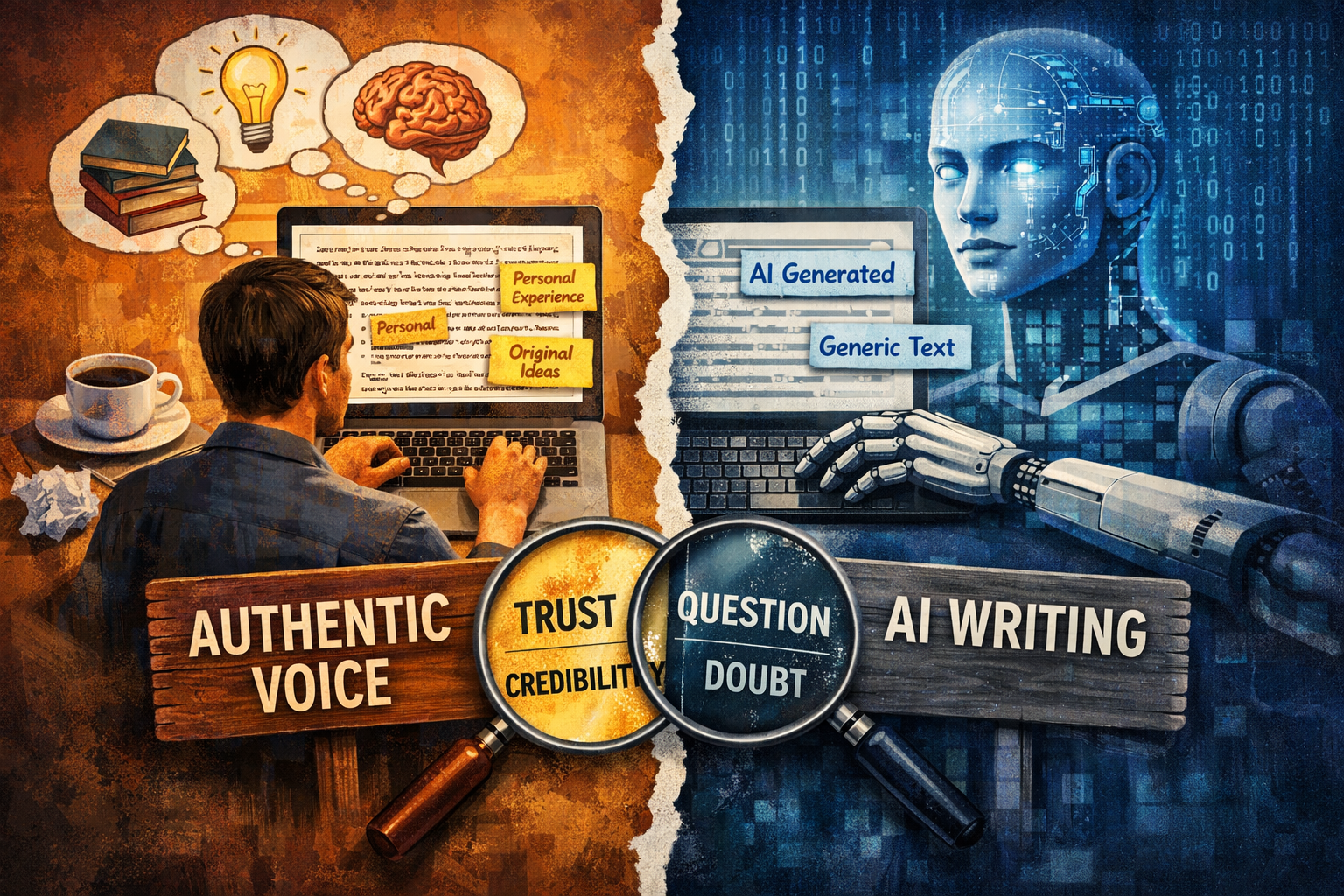

Throughout my career, I have spent an inordinate amount of time learning how to write, which eventually led to the development of my own voice. I believe that strong writing is mostly about practice, organization of thoughts and critical thinking. If an idea was communicated well, my credibility grew. I long assumed that a well-written article, story or even an email had an authenticity that artificial intelligence could not match.

As generative AI tools have become embedded in professional life, my assumption has evolved. AI can now generate polished articles, reports and commentary within seconds.

At the same time, in recent years many of us have had a subtle reaction when reading something online. The writing looks polished and structured, but something about it feels slightly distant. The question often lingers in the background: Did the author think this through themselves, or did a tool assemble it for them? That uncertainty can quickly shape how we interpret the message. Many readers have come to recognize certain telltale indicators of AI-generated writing. The surface quality is often impressive, yet I have noticed that readers sometimes respond differently when they suspect a piece was largely generated by AI rather than shaped through the author’s own thinking.

The concern is not the use of AI itself. Many other professionals and I use it to organize ideas, summarize research or test alternative ways of explaining complex issues. I advocate for its use to shorten the time it takes to write the first draft. The credibility question emerges when AI begins to replace the analytical work and critical thinking that typically sit behind thoughtful writing. In leadership environments especially, written communication has long served as a signal of judgement, experience and intellectual effort. When the thinking behind the words becomes uncertain, readers may start to question how much of the perspective actually belongs to the author.

Research is beginning to explore this dynamic. A 2024 experimental study published in the Journal of Intelligence found that when readers were informed that a piece of writing had been generated by AI, both the perceived credibility of the message and the credibility of the author declined, even though the text itself was identical (Jia et al., 2024). The researchers also observed lower ratings for perceived expertise and authenticity when AI authorship was disclosed.

Additional work points in a similar direction. A recent study examining audience responses to AI-assisted communication reported that trust in the author tended to decrease once readers learned that generative AI had contributed to the content, even when the overall quality of the writing remained high (Zhou & Lee, 2025). The reaction appeared to reflect concerns about authenticity and intellectual effort rather than concerns about grammar or coherence. That may change over time, as AI becomes more embedded in our written work and as more university graduates who have only ever been exposed to AI-generated content enter the workforce. They likely won’t have any other frame of reference and will accept that most content is probably generated by AI.

These findings align with what many people are observing in practice. AI-generated writing often carries recognizable patterns: generalized statements that apply broadly across contexts, highly structured paragraphs and language that is technically correct yet detached from lived experience. In contrast, writing that reflects genuine engagement with a topic typically includes personal examples, interpretation and judgement grounded in real situations. Readers often respond differently to those signals.

Canadian research organizations have also begun examining the broader implications of generative AI for authorship and trust. Institutions such as the Schwartz Reisman Institute for Technology and Society at the University of Toronto and the Canadian Institute for Advanced Research (CIFAR) have noted that generative AI raises new questions about authenticity, attribution and intellectual responsibility in professional communication. These discussions reflect a growing awareness that credibility may depend not only on what is written, but also on how readers believe the work was produced.

AI will likely remain a valuable tool for drafting, organizing information and accelerating early stages of thinking. The challenge for professionals is maintaining ownership of the interpretation and perspective that give writing its credibility. One practical approach is to treat AI as an assistant rather than the primary author. When the final piece reflects genuine thinking, examples and experience, readers are more likely to see both the work and the author as thoughtful and trustworthy. I believe the written word is too valuable to be fully replaced by AI, and I encourage people to continue to produce their own original thoughts and perspectives. We will see where the next few years take us, and I hope the pendulum will swing back closer to where it was before AI. It will be interesting to see whether credibility eventually adapts to AI-assisted writing, or whether readers will continue looking for signs that genuine thinking sits behind the words.